Meta Orion: The Glasses That Will Enable American Autonomous Manufacturing

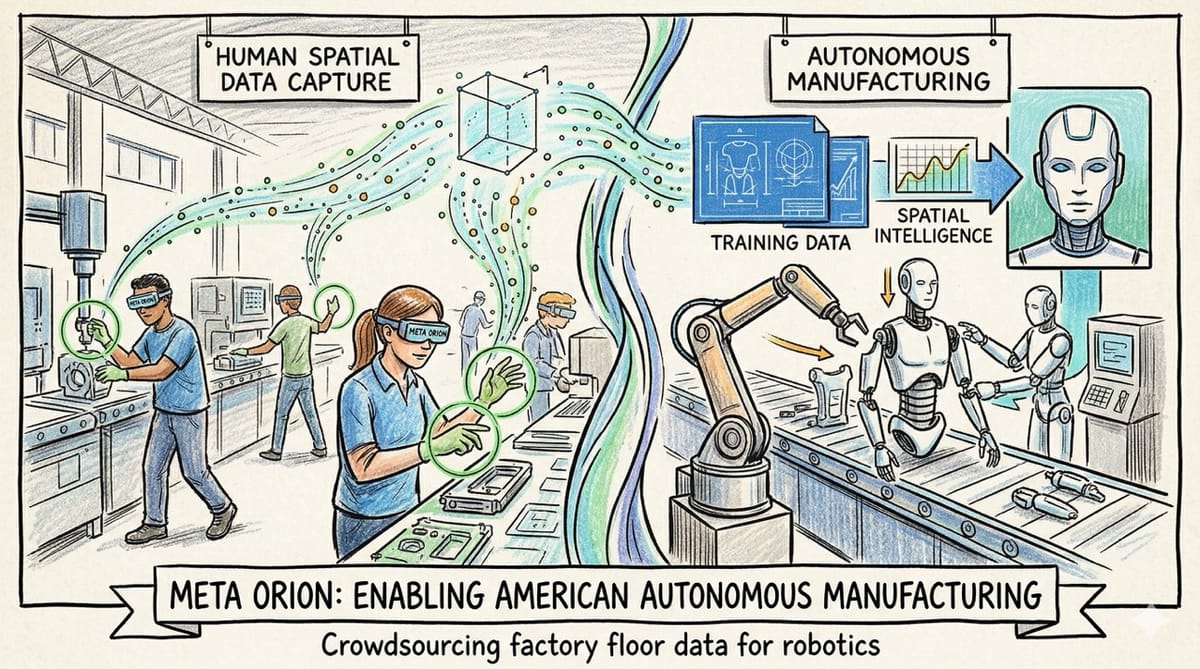

We assume augmented reality glasses are just another consumer gadget for reading text messages. In reality, Meta Orion is quietly laying the groundwork for the training data required to build a massive, autonomous American robotic workforce.

The race to build humanoid robots is currently bottlenecked by a lack of real-world training data. Meta is positioned to address this by crowdsourcing human-labeled spatial data directly from the factory floor.

Inspiration: This piece grew out of analyzing the strategic unveiling of the Meta Orion glasses and cross-referencing it with Dr. Fei-Fei Li's recent podcast on why large language models struggle with spatial intelligence. The realization: augmented reality hardware may be the ultimate Trojan horse for collecting the physical data required for autonomous robotics.

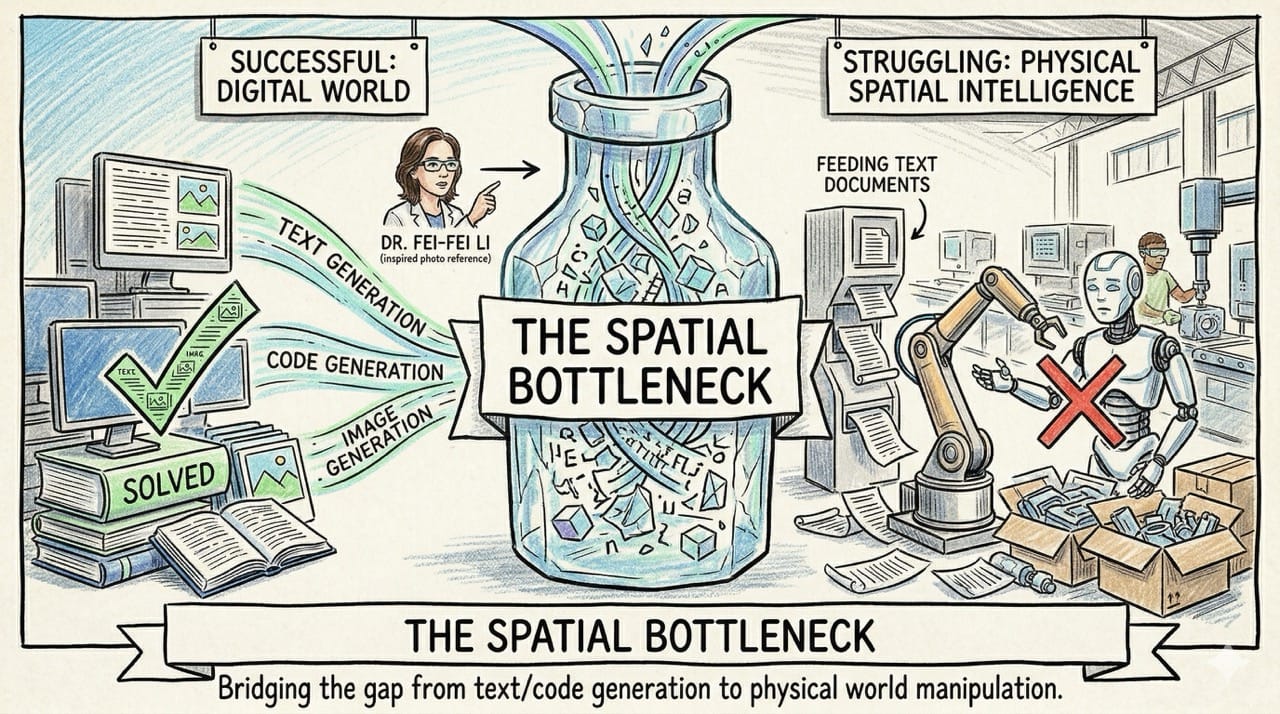

The Spatial Bottleneck

The artificial intelligence industry has made dramatic progress in generating text, code, and images.

However, as Dr. Fei-Fei Li recently highlighted, these models still severely struggle with actual spatial intelligence in the physical world.

You cannot easily teach a humanoid robot how to weld a joint or pack a shipping box solely by feeding it text documents.

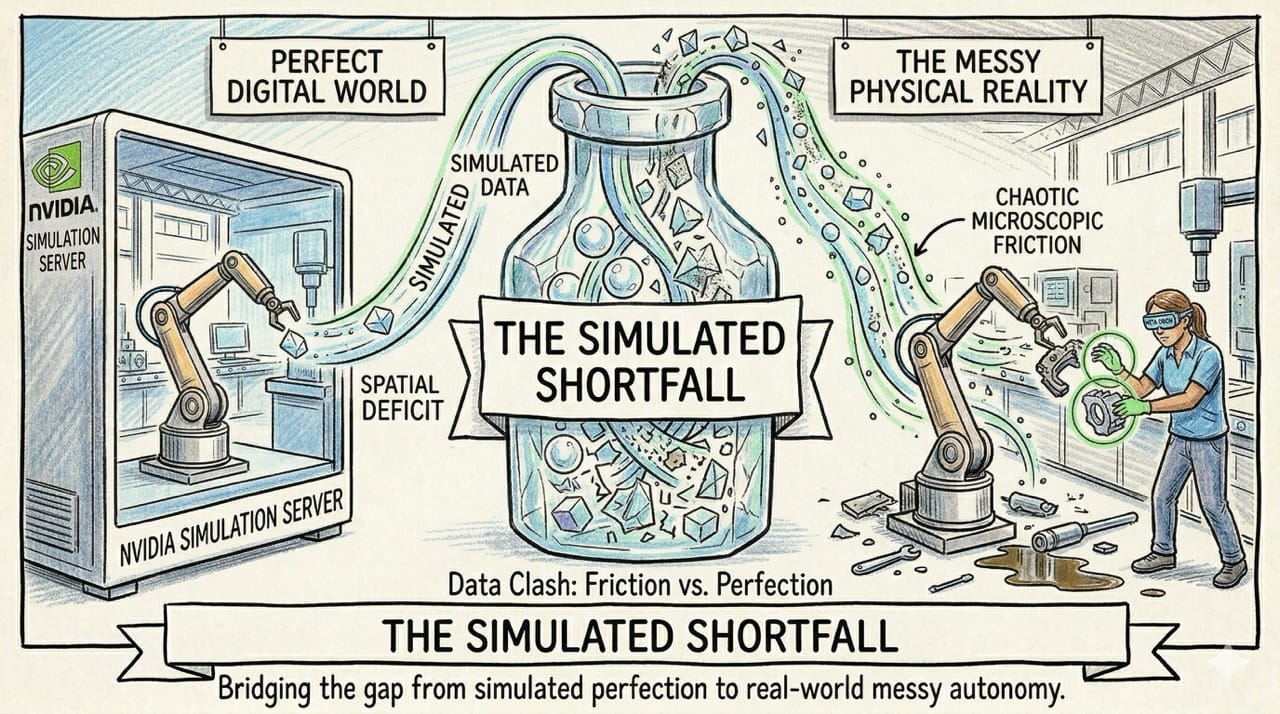

The Simulated Shortfall

Companies like Nvidia are currently attempting to solve this spatial deficit by training robotic models inside massive simulated software environments.

While simulated data is helpful, it often fails to capture the chaotic, fine-grained friction of the real physical world, a shortfall commonly called the sim-to-real gap.

True robotic autonomy requires massive datasets recorded directly from the actual, messy point of view of a human worker.

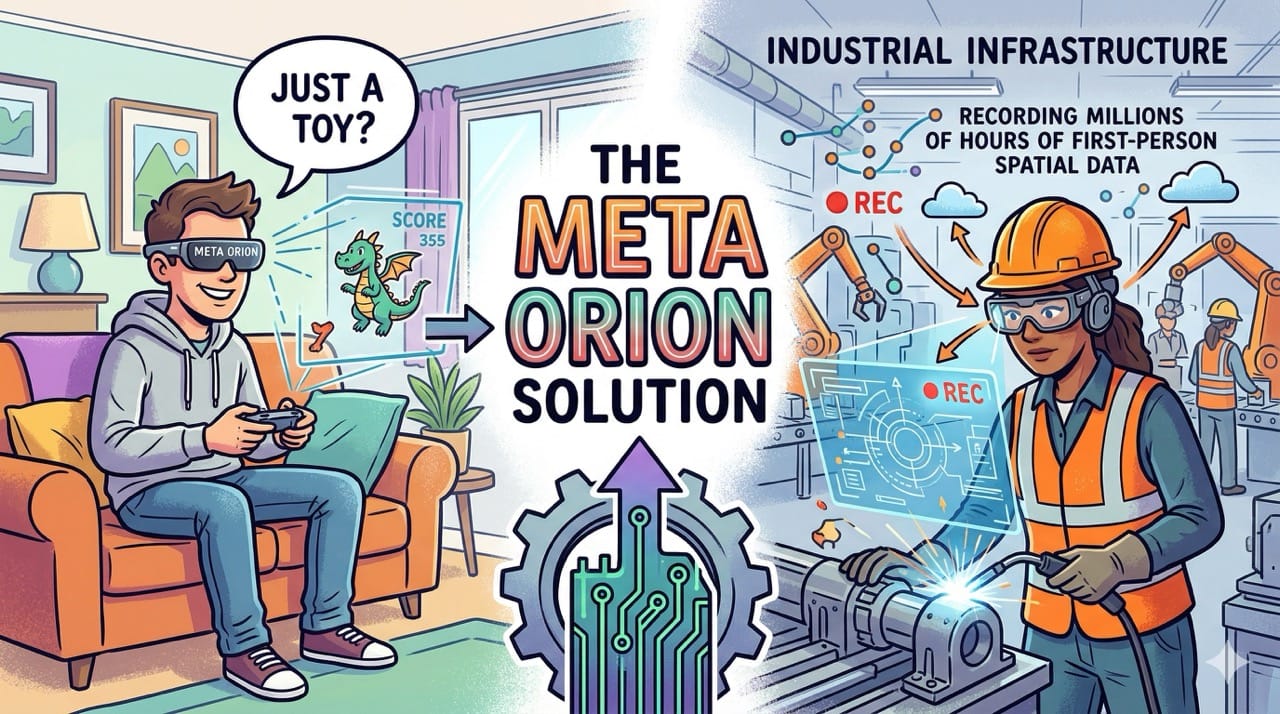

The Orion Solution

This is precisely where the Meta Orion augmented reality glasses transform from a consumer toy into industrial infrastructure.

Factory workers are already highly accustomed to wearing protective eyewear or corrective prescription glasses during their shifts.

Outfitting the American manufacturing workforce with augmented reality glasses would provide an immediate, low-friction method to record millions of hours of first-person spatial data.

Immediate Human Leverage

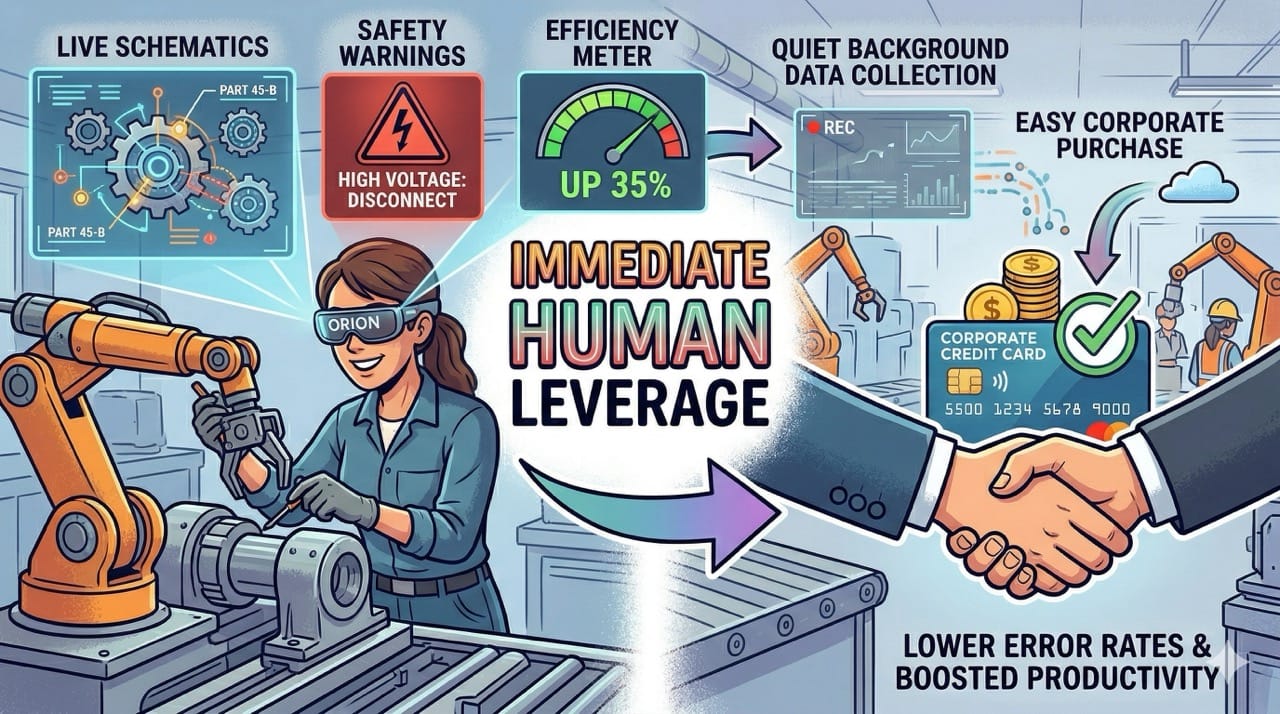

The genius of this strategy is that it provides massive immediate value to the human worker while quietly collecting data in the background.

The Orion glasses can project live schematics, safety warnings, and efficiency metrics directly into the worker's field of vision.

This could lower error rates and boost human productivity, making the hardware an easy corporate purchase.

The Battery Stopgap

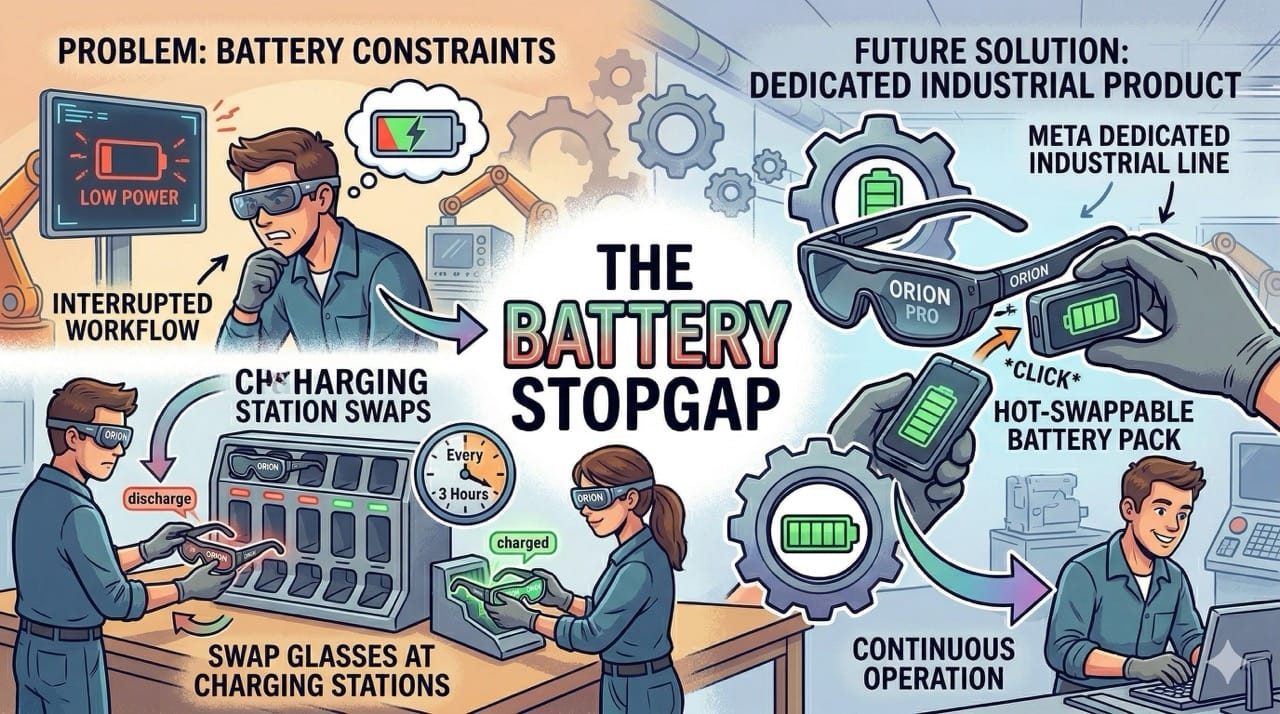

The current iteration of augmented reality glasses struggles with severe battery life constraints.

However, this is manageable on a factory floor: workers can simply swap their glasses for a charged pair at charging stations every few hours.

Meta could also build a dedicated industrial product line with hot-swappable battery packs to ensure continuous operation.
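
The swap logistics above come down to simple arithmetic. The sketch below is a rough planning aid, not a description of any real Meta product: the function names are hypothetical, and the runtime and charge times are placeholder values chosen only for illustration. It assumes each worker always needs one charged pair ready the moment the current pair runs out.

```python
import math


def units_per_worker(runtime_h: float, charge_h: float) -> int:
    """Pairs of glasses needed per worker so a charged pair is always ready.

    One pair is worn for runtime_h hours, then charges for charge_h hours.
    A full wear-plus-charge cycle must be covered by pairs worn back to back.
    """
    if runtime_h <= 0 or charge_h < 0:
        raise ValueError("runtime must be positive and charge time non-negative")
    return math.ceil((runtime_h + charge_h) / runtime_h)


def swaps_per_shift(shift_h: float, runtime_h: float) -> int:
    """Number of times a worker visits the charging station during one shift."""
    if shift_h < 0 or runtime_h <= 0:
        raise ValueError("shift must be non-negative and runtime positive")
    return max(0, math.ceil(shift_h / runtime_h) - 1)


if __name__ == "__main__":
    # Placeholder figures for illustration only, not measured specifications.
    print(units_per_worker(runtime_h=2.0, charge_h=3.0))
    print(swaps_per_shift(shift_h=8.0, runtime_h=2.0))
```

Under these placeholder numbers, the math shows why hot-swappable battery packs matter: swapping a battery instead of the whole device means the expensive part of the inventory does not have to be multiplied for every worker.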

Perfect Labeling

Because a human is actively wearing the glasses and performing the physical task, the resulting data is grounded in real work: every recording shows a task actually being carried out by a trained worker.

The human worker is essentially labeling the spatial data in real time as they complete their manufacturing quota.

This greatly reduces the massive secondary cost of paying human contractors to manually review and label millions of hours of raw video footage.
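
To make "labeling in real time" concrete, here is a minimal sketch of one way it could work. It assumes the factory already logs a timestamp whenever a worker starts a step of a work order; the `StepEvent` and `LabeledSegment` types and the `label_segments` function are hypothetical names invented for this example, not part of any Meta or Orion API. Each step start marks the beginning of a labeled stretch of the first-person recording.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StepEvent:
    """A work-order step the worker started, in seconds from recording start."""
    start_s: float
    step: str


@dataclass(frozen=True)
class LabeledSegment:
    """A stretch of the recording tagged with the step performed during it."""
    step: str
    start_s: float
    end_s: float


def label_segments(events: list[StepEvent], recording_end_s: float) -> list[LabeledSegment]:
    """Turn step-start events into labeled segments of the recording.

    Each step runs until the next step starts; the last step runs until the
    recording ends. Zero-length or negative-length segments are dropped.
    """
    ordered = sorted(events, key=lambda e: e.start_s)
    segments = []
    for i, event in enumerate(ordered):
        is_last = i + 1 == len(ordered)
        end_s = recording_end_s if is_last else ordered[i + 1].start_s
        if end_s > event.start_s:
            segments.append(LabeledSegment(event.step, event.start_s, end_s))
    return segments


if __name__ == "__main__":
    # Illustrative events only; real step names would come from the work order.
    events = [StepEvent(0.0, "pick part"), StepEvent(12.5, "weld joint"), StepEvent(40.0, "inspect")]
    for segment in label_segments(events, recording_end_s=55.0):
        print(segment)
```

The labels here are only as good as the step log, so a real pipeline would still need spot checks. The point is that the worker's normal workflow produces the labels as a byproduct.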

The Humanoid Handoff

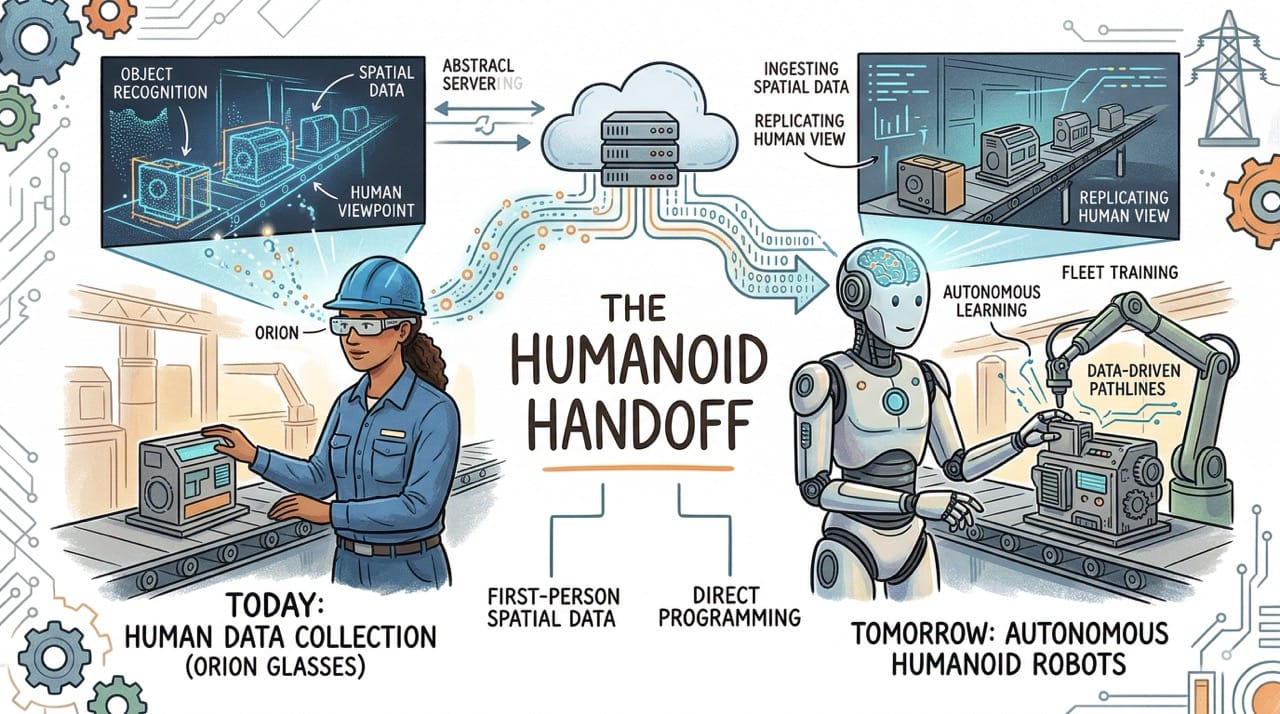

This specific, first-person viewpoint is exactly what is required to eventually train a fleet of humanoid robots.

A humanoid robot with head-mounted cameras sees the world from a vantage point nearly identical to that of a human wearing augmented reality glasses.

The spatial data collected by the Orion glasses today could directly train the autonomous humanoid robots of tomorrow.

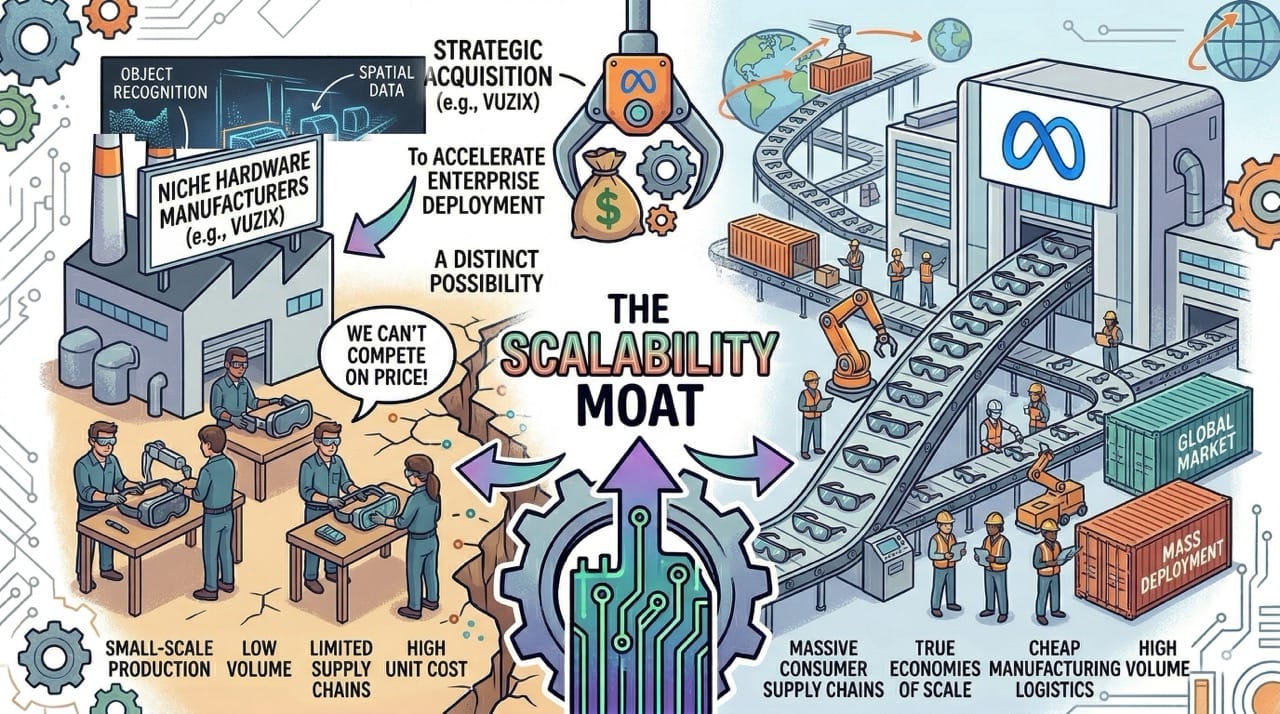

The Scalability Moat

Niche hardware manufacturers like Vuzix have been building industrial augmented reality headsets for years.

However, they lack the massive consumer supply chains required to reach true economies of scale.

Meta is one of the few companies with the hardware logistics to manufacture these glasses cheaply, and a strategic acquisition of Vuzix remains a distinct possibility to accelerate enterprise deployment.

The Google Threat

The most legitimate threat to Meta in this spatial data race is Google.

Google has deep hardware experience with its Pixel line, and its past experiments with Google Glass show that it understands the augmented reality form factor.

If Google pivots its massive artificial intelligence resources toward an enterprise headset, it could trigger an immediate arms race for control of the American factory floor.

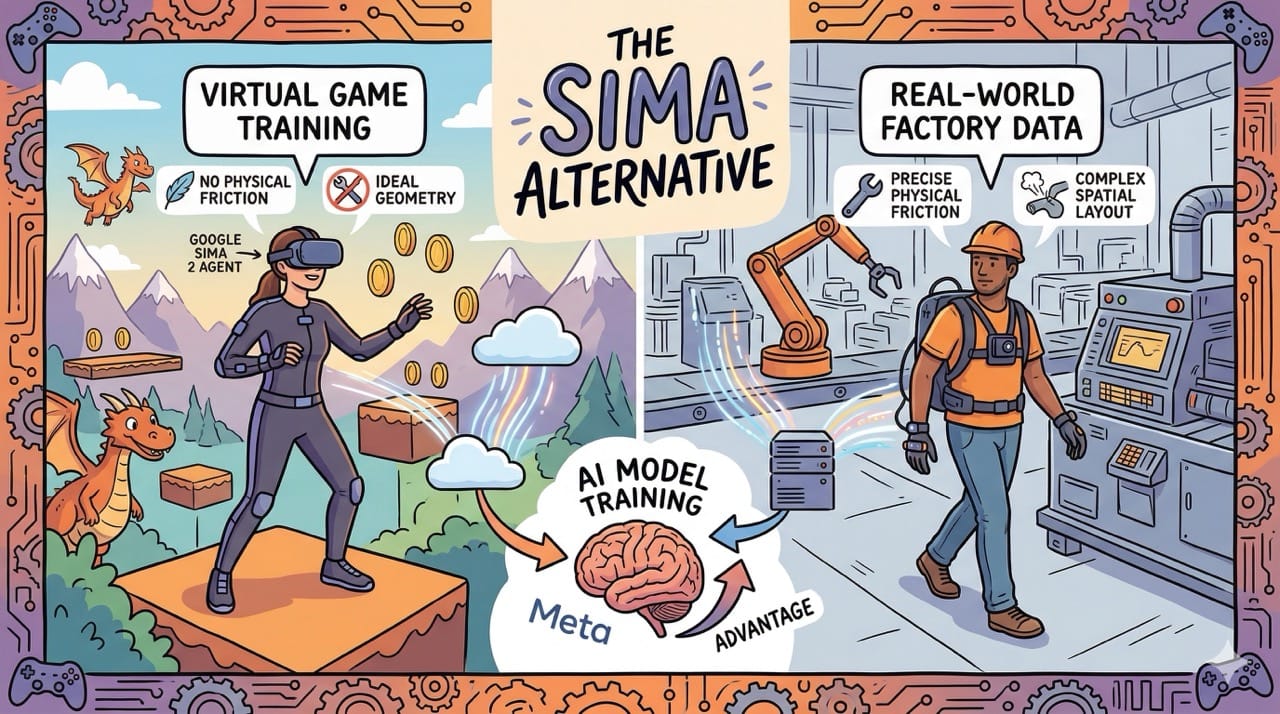

The SIMA Alternative

It is also worth noting how other models are attempting to solve this spatial deficit.

Google DeepMind's SIMA 2 agent takes a radically different approach: it learns to navigate and act inside 3D video games rather than in the physical world.

While innovative, video games still lack the precise physical friction of a factory floor, leaving Meta's real-world data collection strategy with a distinct advantage.

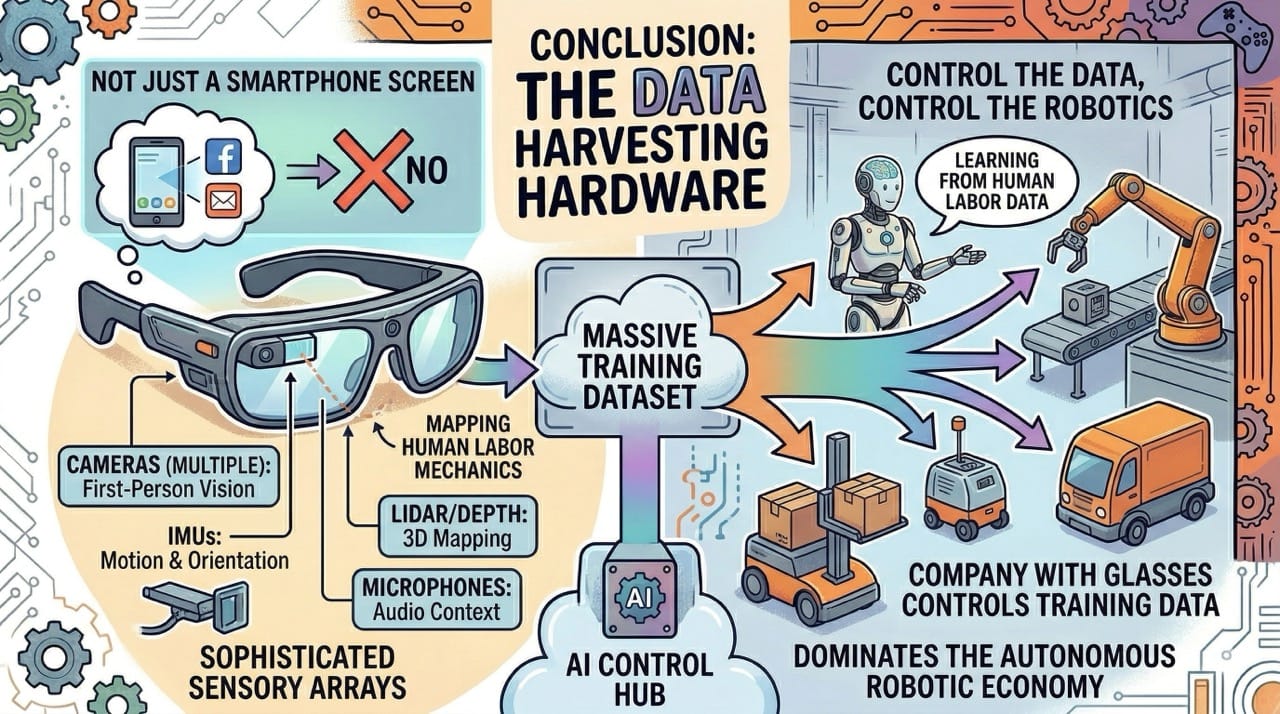

Conclusion: The Data Harvesting Hardware

Do not view augmented reality glasses simply as a replacement for the smartphone screen.

They are highly sophisticated sensor arrays capable of mapping the physical mechanics of human labor.

The company that controls the glasses will eventually control the training data for the entire autonomous robotic economy.