Embodied General Intelligence: The Final Frontier of Artificial Intelligence

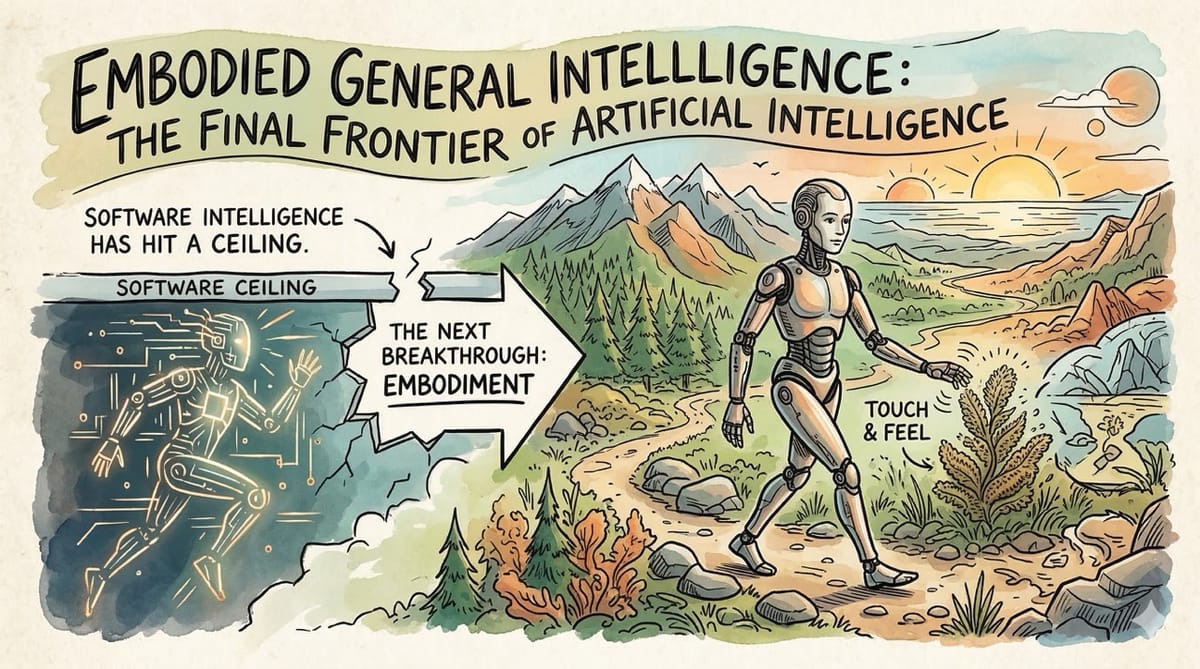

Large language models taught machines to think. Embodied general intelligence will teach them to exist. The race to build AI that can perceive, move, and physically interact with the real world is the most consequential technological pursuit of the next decade.

Software intelligence has hit a ceiling. The next breakthrough requires AI that can touch, feel, and navigate the physical world in real time.

Inspiration: Watching John Carmack demonstrate a robot learning to play a physical Atari game with a camera and a joystick. Then hearing him say that reality is not a turn-based game. Realizing that the entire AI industry is building minds without bodies, and that the body might be the missing piece.

We are living through an extraordinary moment in artificial intelligence.

Large language models can write essays, pass bar exams, and generate code that compiles on the first try.

But they cannot pick up a coffee cup.

They cannot navigate a crowded sidewalk.

They cannot catch a ball thrown at them from across a room.

This is the fundamental gap that embodied general intelligence is trying to close.

What Is Embodied General Intelligence

The simplest way to explain it is this.

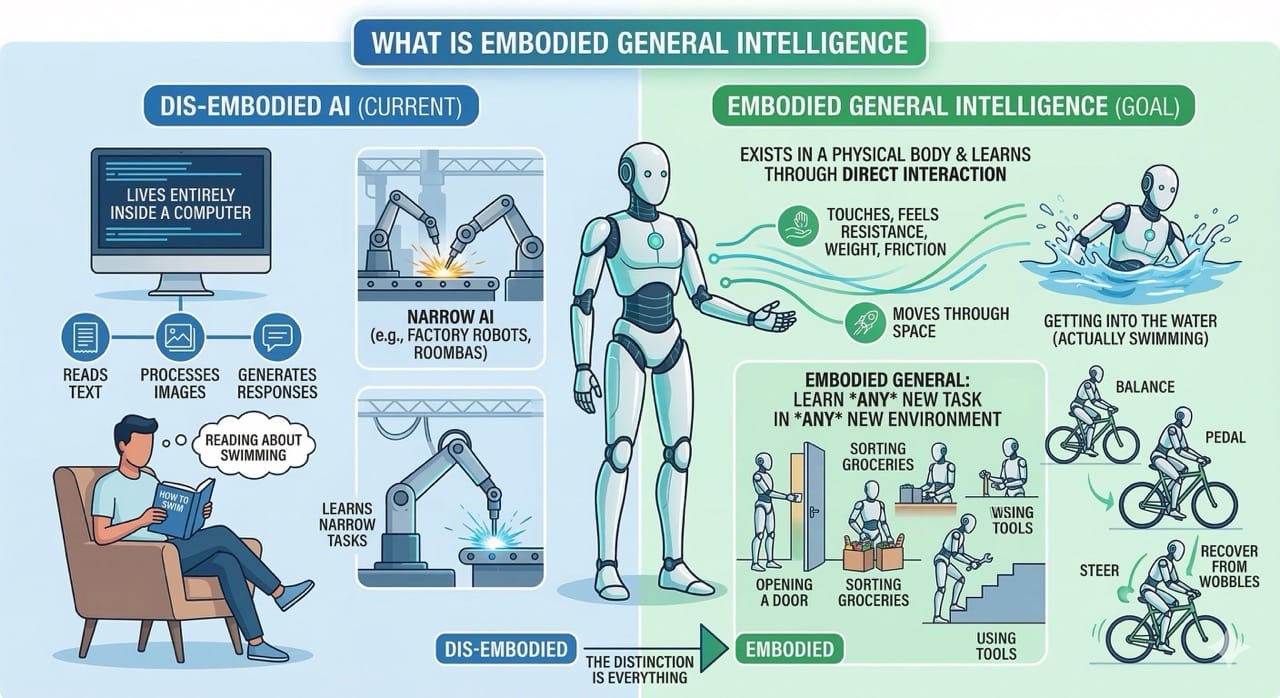

Current AI lives entirely inside a computer. It reads text, processes images, and generates responses. It never touches anything. It never moves through space. It never feels resistance, weight, or friction.

Embodied general intelligence is the pursuit of AI that exists in a physical body and learns through direct interaction with the real world.

Think of it as the difference between reading a book about swimming and actually getting into the water.

A disembodied AI can describe in perfect detail how to ride a bicycle. An embodied AI would need to actually balance, pedal, steer, and recover from wobbles in real time.

The "general" part is critical.

We already have narrow embodied AI. Factory robots that weld the same joint ten thousand times a day. Roombas that vacuum a floor using basic sensor logic.

Embodied general intelligence means a physical agent that can learn any new task in any new environment without being explicitly programmed for it.

That distinction is everything.

The Technical Architecture

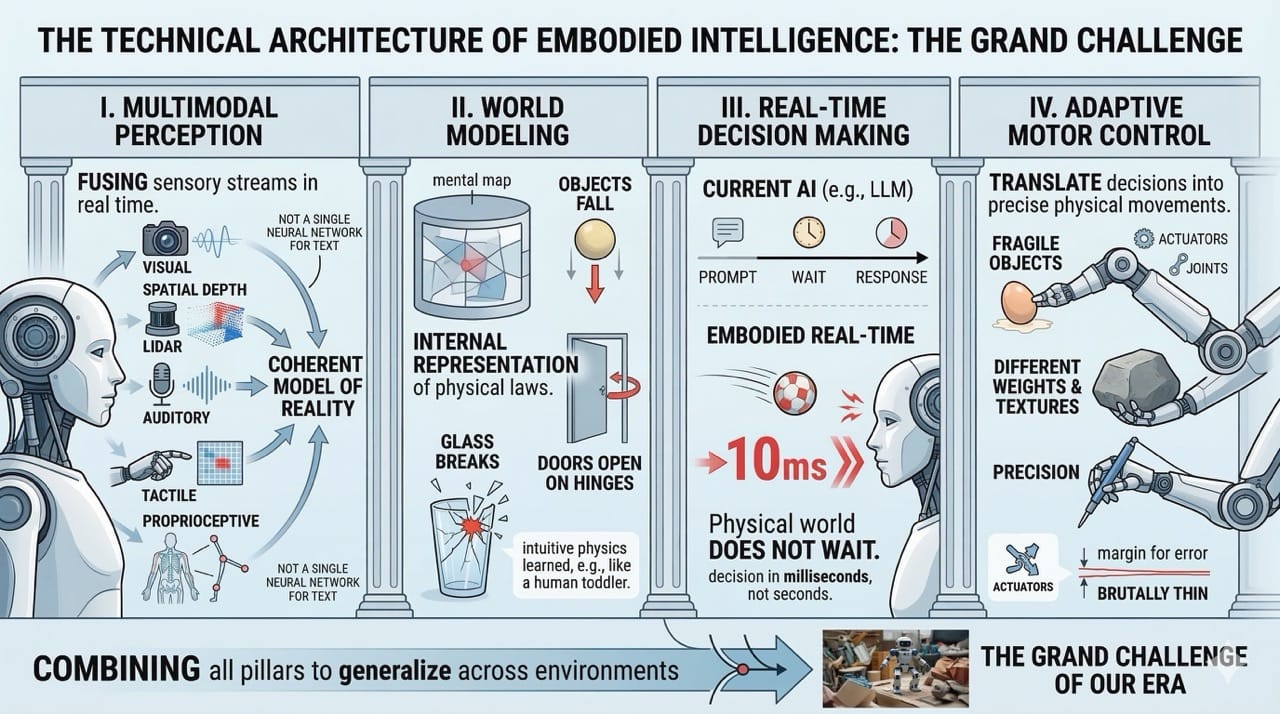

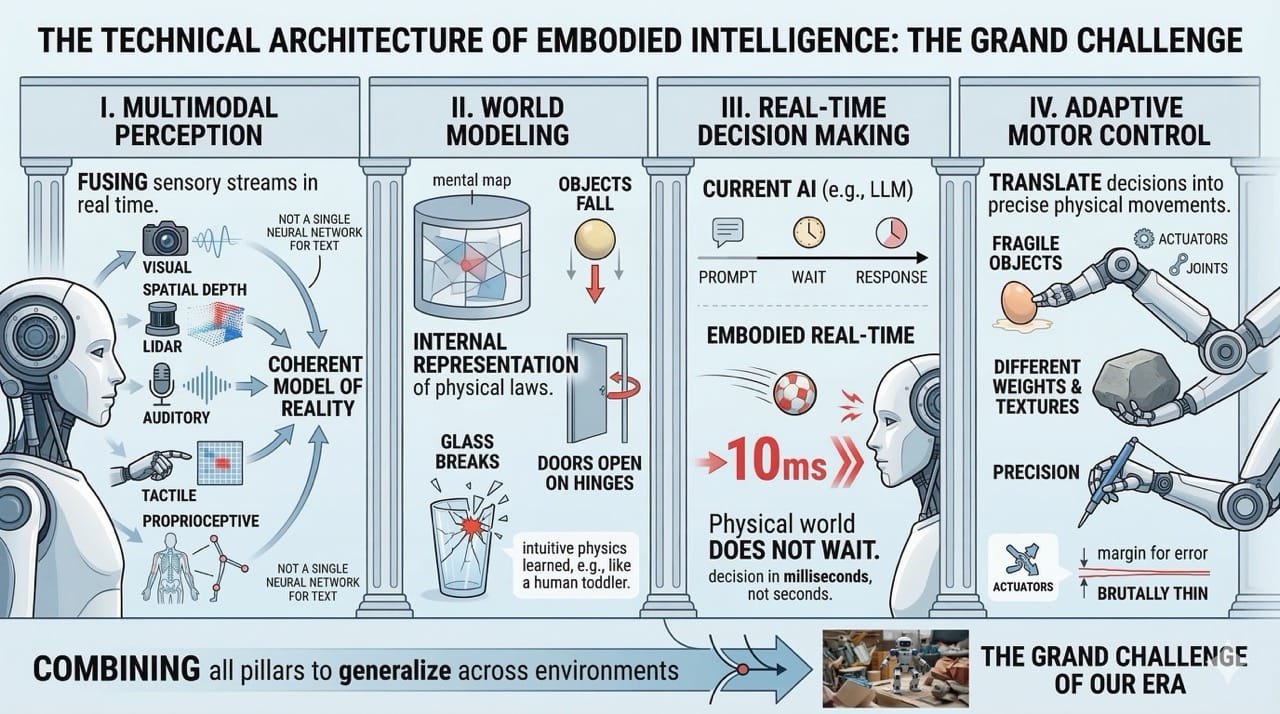

To understand why this is so difficult, you need to understand the four pillars that underpin embodied intelligence systems.

The first is multimodal perception.

An embodied agent must simultaneously process visual input, spatial depth, auditory signals, tactile feedback, and proprioceptive data about its own body position.

This is not a single neural network processing text.

It is dozens of sensory streams being fused into a coherent model of reality in real time.
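
To make the fusion problem concrete, here is a minimal sketch, assuming every sensor publishes timestamped readings at its own rate. The names `Reading` and `fuse_latest`, the scalar payload, and the 50 ms staleness cutoff are all illustrative assumptions, not the API of any real robot stack.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    sensor: str   # e.g. "camera", "touch", "joint_angle"
    t: float      # timestamp in seconds
    value: float  # simplified scalar payload (real streams are images, force maps, etc.)


def fuse_latest(readings, now, max_age=0.05):
    """Return the freshest value per sensor, dropping readings older than max_age seconds."""
    latest = {}
    for r in readings:
        if r.t > now or now - r.t > max_age:
            continue  # skip readings from the future or too stale to trust
        if r.sensor not in latest or r.t > latest[r.sensor].t:
            latest[r.sensor] = r
    return {name: r.value for name, r in latest.items()}


stream = [
    Reading("camera", 0.000, 1.0),
    Reading("camera", 0.033, 1.1),
    Reading("touch", 0.040, 0.2),
    Reading("joint_angle", 0.000, 0.5),
]
snapshot = fuse_latest(stream, now=0.060)
```

In this toy run the joint-angle reading is already too old to use, so the agent has to act on a partial picture. That is the everyday condition of a physical system, not an edge case.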

The second is world modeling.

The agent needs an internal representation of how the physical world works.

It needs to understand that objects fall when released, that doors open on hinges, that glass breaks under force.

Current AI systems largely lack the intuitive physics that a human toddler develops by age two.
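
A toy version of learning a world model from experience might look like the sketch below. It assumes the agent has watched a few objects fall and recorded how far each dropped after a given time, then fits a single parameter (gravity) by least squares. The function names and the noise-free data are assumptions for illustration. Real world models are learned over high-dimensional sensor data, not one equation.

```python
def fit_gravity(times, drops):
    """Least-squares estimate of g in the model drop = 0.5 * g * t**2."""
    # The model is linear in g: drop = g * x, where x = 0.5 * t^2.
    xs = [0.5 * t * t for t in times]
    numerator = sum(x * d for x, d in zip(xs, drops))
    denominator = sum(x * x for x in xs)
    return numerator / denominator


def predict_drop(g, t):
    """Predict how far a released object has fallen after t seconds."""
    return 0.5 * g * t * t


# Hypothetical observations generated with g = 9.81 m/s^2.
observed_times = [0.1, 0.2, 0.3]
observed_drops = [0.5 * 9.81 * t * t for t in observed_times]

g_estimate = fit_gravity(observed_times, observed_drops)
```

Once the parameter is fitted, `predict_drop(g_estimate, 0.5)` lets the agent anticipate a fall it has never observed, which is the whole point of a world model.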

The third is real-time decision making.

This is where things break down for most current approaches. Large language models operate in turns. You send a prompt, you wait, you get a response.

The physical world does not wait.

A ball flying toward your head requires a decision in milliseconds, not seconds.
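
The arithmetic is worth doing once. The sketch below assumes a ball thrown from 5 meters away at 20 meters per second and compares a 10 millisecond control loop with a 2 second prompt-and-response turn. All of these numbers and function names are illustrative assumptions.

```python
def time_to_impact_ms(distance_m, speed_m_s):
    """Milliseconds until an object closing at constant speed arrives."""
    return 1000.0 * distance_m / speed_m_s


def cycles_before_impact(distance_m, speed_m_s, loop_latency_ms):
    """Number of complete sense-decide-act cycles that fit before impact."""
    return int(time_to_impact_ms(distance_m, speed_m_s) // loop_latency_ms)


fast_loop = cycles_before_impact(5, 20, loop_latency_ms=10)    # onboard control loop
slow_turn = cycles_before_impact(5, 20, loop_latency_ms=2000)  # turn-based response
```

Under these assumptions the ball arrives in 250 milliseconds. The fast loop gets 25 chances to react. The turn-based system gets none.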

The fourth is adaptive motor control.

The system must translate decisions into precise physical movements across joints, actuators, and limbs.

Every surface is different.

Every object has a different weight, texture, and fragility.

The margin for error in the physical world is brutally thin.
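
One way to picture adaptive control is a gripper that squeezes harder only while the object is slipping, and gives up before it crushes something fragile. The sketch below is a simplified illustration with assumed names and units. Slip is simulated by comparing against a hidden `required_force`, where a real robot would read it from tactile sensors.

```python
def adapt_grip(required_force, max_safe_force, start=0.5, step=0.5):
    """Raise grip force until the object stops slipping or the safe limit is hit.

    Returns (final_force, holding). Slip is simulated as force < required_force.
    """
    force = start
    while force < required_force:          # object is still slipping
        if force + step > max_safe_force:  # squeezing harder would damage it
            return force, False
        force += step
    return force, True


mug = adapt_grip(required_force=2.0, max_safe_force=5.0)
egg = adapt_grip(required_force=4.0, max_safe_force=3.0)
```

The same loop holds the mug and refuses to crush the egg. The controller never knew either object's weight in advance. It found the answer through contact.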

Combining all four of these pillars into a single system that generalizes across environments is the grand challenge of our era.

Where We Are Right Now

The honest answer is early. Very early.

We have made extraordinary progress in individual components.

Vision models can now match or exceed human accuracy at identifying objects on standard benchmarks.

Language models can reason about tasks and generate step-by-step plans.

Reinforcement learning agents can master complex games and simulations.

But integrating all of these into a physical body that operates reliably in unpredictable environments remains an unsolved problem.

The robotics industry is making real strides. Tesla's Optimus, Boston Dynamics' Atlas, Agility Robotics' Digit, and Unitree's humanoids are all demonstrating increasingly capable physical behavior.

NVIDIA has built an entire platform around what it calls "Physical AI," including the Jetson Thor computing module, which is designed to put server-grade AI compute directly onboard a robot.

Companies like Generalist AI have introduced GEN-0, a new class of embodied foundation models trained directly on real-world physical interaction data spanning millions of diverse manipulation tasks.

Research publications on embodied AI in healthcare alone increased nearly sevenfold between 2019 and 2024.

The momentum is real. But we are still closer to the beginning than the end.

A useful reality check from John Carmack puts it perfectly.

Ask your dancing humanoid robot to pick up a joystick and learn how to play an obscure video game. That is when you will know how close we actually are.

The Carmack Thesis

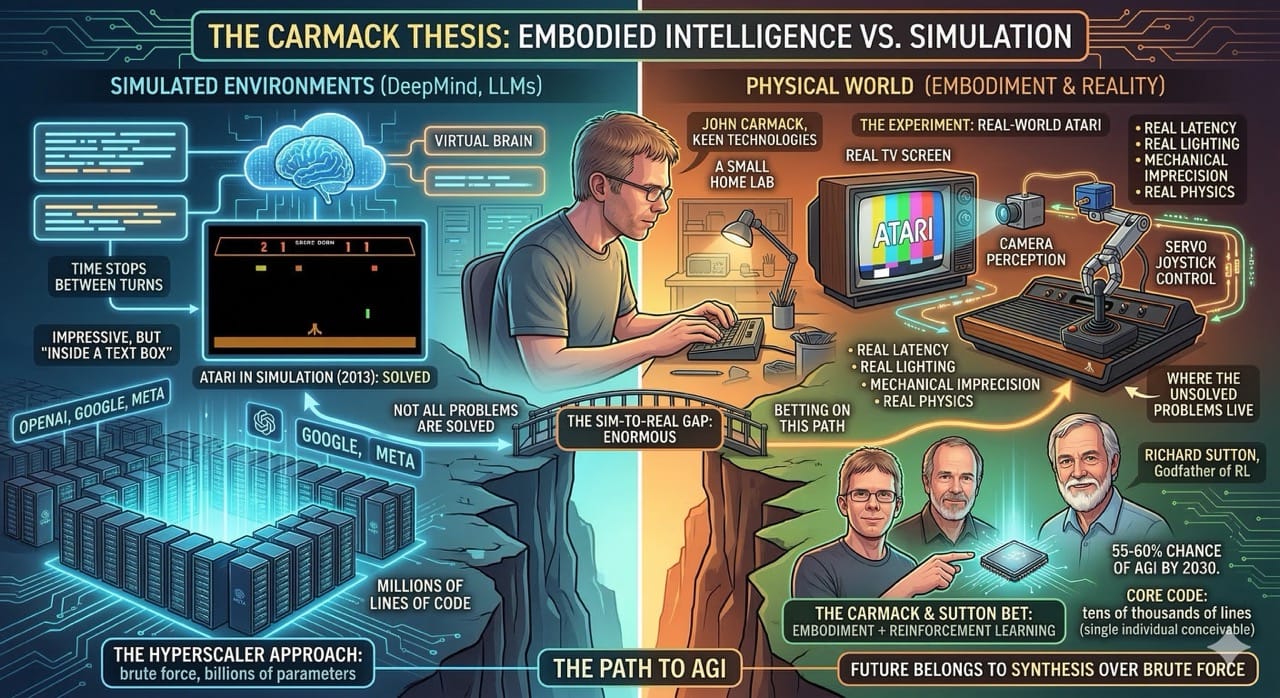

John Carmack is one of the most important voices in this entire conversation.

He co-founded id Software. He built the engines behind Doom and Quake. He served as CTO of Oculus and helped pioneer virtual reality at Meta.

In 2022, he left all of that behind to start Keen Technologies, a small AGI research lab operating out of his home in Dallas.

His thesis is simple and provocative.

Carmack believes that modern AI research has become too comfortable in simulated, turn-based environments.

Large language models are impressive. But they operate in a world where time stops between each interaction.

Reality does not work that way.

Carmack's argument is that true intelligence requires continuous interaction with the physical world. You cannot think your way to general intelligence from inside a text box.

To prove this, his team at Keen built a system where a robot uses a camera pointed at a physical TV screen and a servo motor connected to a real joystick to learn how to play Atari games.

This might sound trivial. DeepMind showed agents learning to play Atari games inside an emulator back in 2013.

But Carmack's version is fundamentally different.

His robot deals with real latency, real lighting changes, real mechanical imprecision, and real physics. The sim-to-real gap is enormous, and that gap is exactly where the unsolved problems live.
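
Latency alone changes the problem. The sketch below is not Keen's code. It is a minimal illustration, with assumed names, of what a fixed delay does: the action the agent chooses at one step only reaches the joystick a few steps later.

```python
from collections import deque


def run_with_latency(actions, delay_steps, noop=0):
    """Return the action that actually takes effect at each step under a fixed delay."""
    pipeline = deque([noop] * delay_steps)  # actions still "in flight"
    applied = []
    for action in actions:
        pipeline.append(action)
        applied.append(pipeline.popleft())
    return applied


chosen = [1, 2, 3, 4]
in_simulation = run_with_latency(chosen, delay_steps=0)
on_hardware = run_with_latency(chosen, delay_steps=2)
```

With no delay, the game sees exactly what the agent chose. With a two-step delay, the first two steps do nothing and every later action lands on a screen that has already moved on. An agent trained only in an emulator has never had to plan around that.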

Carmack has also partnered with Richard Sutton, widely regarded as one of the founders of modern reinforcement learning, who works closely with Keen.

Together, their bet is that the path to AGI runs through embodiment and reinforcement learning, not through scaling language models to ever-larger parameter counts.

Carmack has publicly stated that he believes there is a 55 to 60 percent chance that signs of AGI will emerge by 2030.

He also believes the core code for AGI could be tens of thousands of lines, not millions, which means a single brilliant individual could conceivably write it.

This is a radically different view from the billions-of-dollars, hundreds-of-thousands-of-GPUs approach taken by OpenAI, Google, and Meta.

It may be wrong. But if Carmack is even partially right, it means the biggest breakthrough in AI history could come from a small team, not a hyperscaler.

As I explored in The Ultimate Orchestrator, the future increasingly belongs to individuals who can synthesize across domains rather than armies that scale brute force.

The Implications

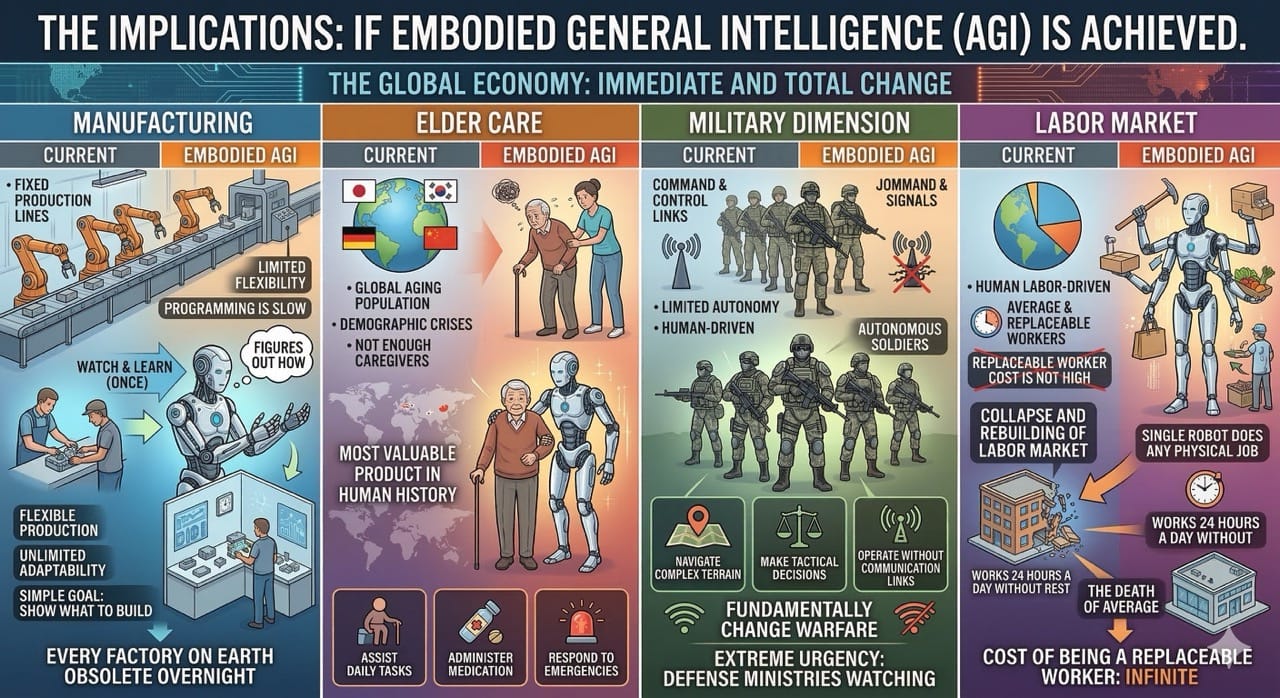

If embodied general intelligence is achieved, the effects on the global economy will be immediate and total.

Start with manufacturing.

A generally intelligent robot that can learn any assembly task by watching a human do it once would make every factory on Earth obsolete overnight.

The concept of a fixed production line disappears. You simply show the machine what to build, and it figures out how.

Then consider elder care.

The world is aging rapidly. Japan, South Korea, Germany, and China are all facing demographic crises with not enough young workers to care for growing elderly populations.

A physically capable, generally intelligent robot that can assist with daily tasks, administer medication, and respond to emergencies would be the most valuable product in human history.

Then there is the military dimension.

Autonomous soldiers that can navigate complex terrain, make tactical decisions, and operate without communication links would fundamentally change the nature of warfare.

Every defense ministry on the planet is watching this space with extreme urgency.

The labor implications alone are staggering.

If a single robot can do any physical job a human can do, and it works 24 hours a day without rest, the entire structure of the global labor market collapses and rebuilds around a new reality.

This connects to the themes I discussed in The Death of Average. The cost of being a replaceable worker will not just be high. It will be infinite.

Additional Thoughts

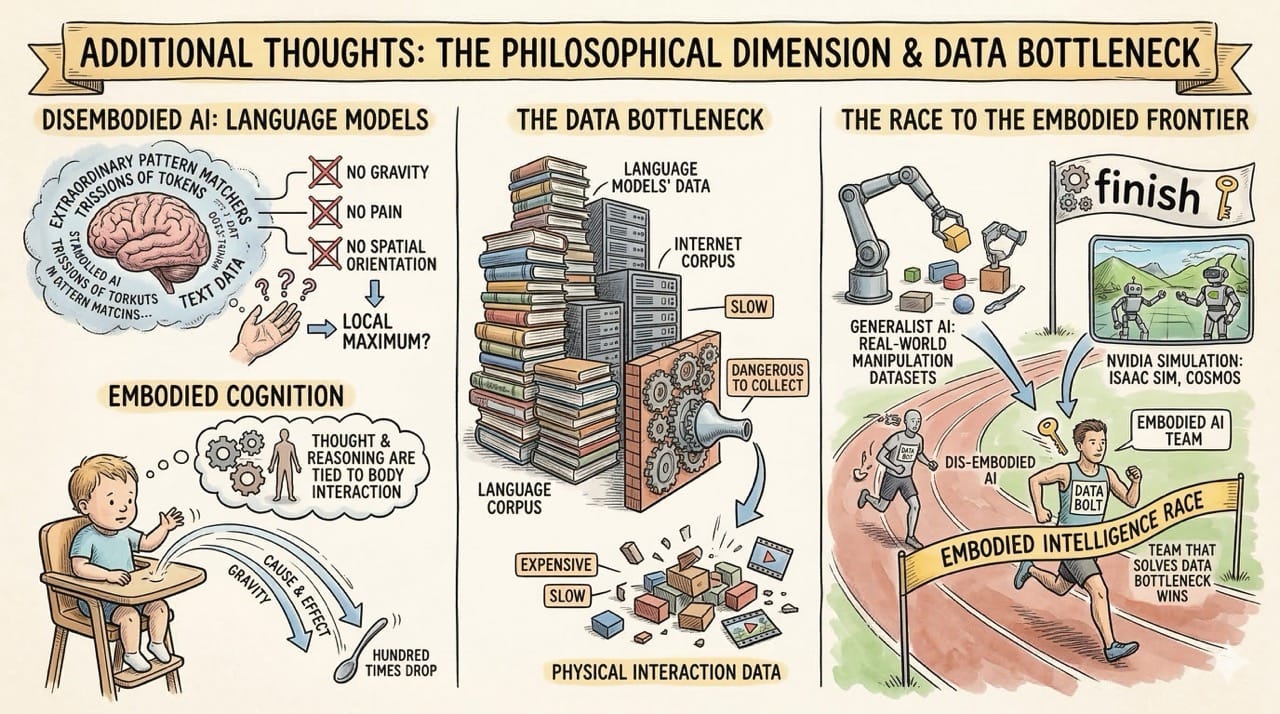

There is a philosophical dimension here that the technical community tends to avoid.

If intelligence truly requires a body, as embodied cognition theory suggests, then we have been building the wrong kind of AI for the last decade.

Language models are extraordinary pattern matchers. But they have no sense of gravity, no understanding of pain, no concept of spatial orientation.

A child learns cause and effect by dropping a spoon from a high chair a hundred times. No amount of text data replicates that experience.

The embodied cognition perspective argues that thinking and reasoning are not separate from the body.

They emerge from the body's interaction with its environment.

If this is correct, then the current approach of building ever-larger disembodied language models may be a local maximum, not the path to general intelligence.

This is exactly what Carmack is betting on.

There is also a data problem worth noting.

Language models benefit from the entire internet as a training corpus. Trillions of tokens of human-generated text.

Embodied AI has no equivalent dataset. Physical interaction data is expensive, slow, and dangerous to collect.

This is why companies like Generalist AI are investing heavily in building the largest real-world manipulation datasets ever assembled, and why NVIDIA's simulation platforms like Isaac Sim and Cosmos are so critical.

The team that solves the data bottleneck for physical AI will likely be the team that wins the embodied intelligence race.

Predictions

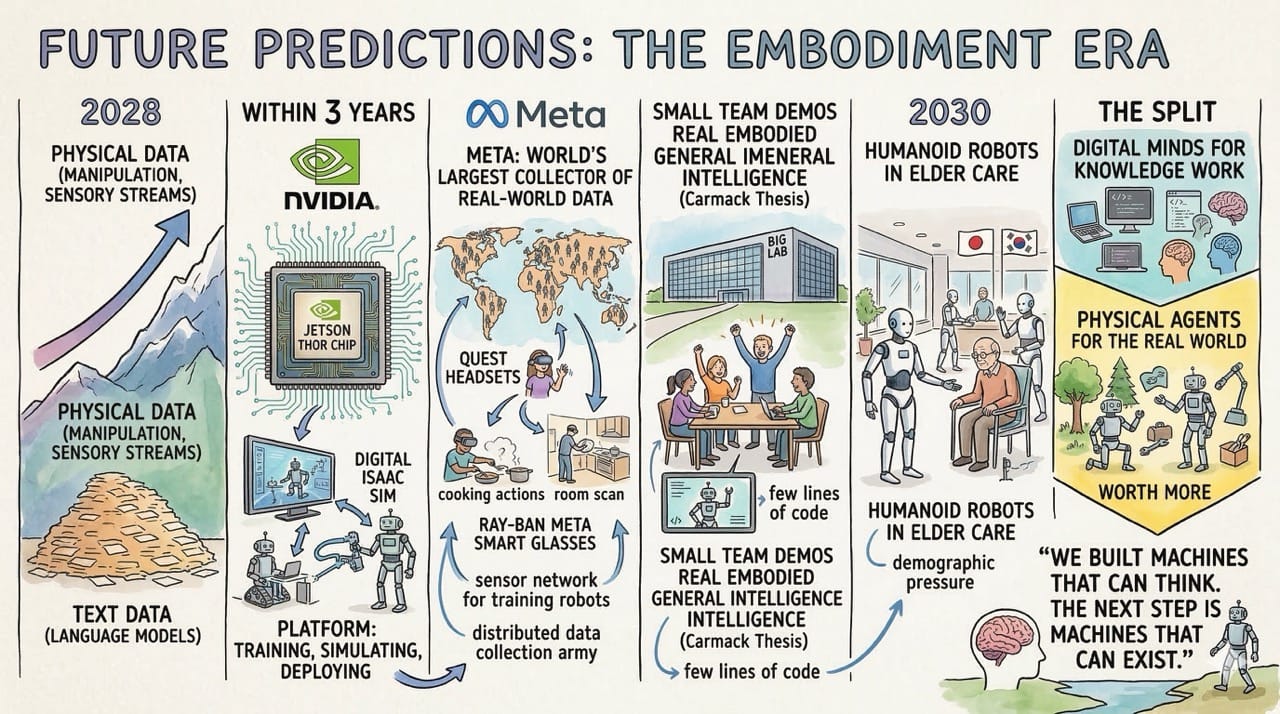

Embodied foundation models will overtake language models as the main focus of AI research by 2028. The scaling-text era will plateau. The next wave comes from physical data.

NVIDIA will become the most important company in robotics within three years. Their full-stack platform for training, simulating, and deploying robots is already the industry default.

Meta will become the largest collector of real-world physical interaction data on the planet.

This is the angle almost nobody is talking about.

Quest headsets track your hands, your body, and your entire room in three dimensions. Ray-Ban Meta smart glasses can capture first-person video of how humans interact with the physical world.

That is exactly the data embodied AI is starving for.

Every time someone cooks a meal wearing Ray-Bans, picks up a tool in mixed reality, or navigates a crowded street with their glasses on, Meta is potentially collecting the kind of training data that no robotics lab can generate at scale.

They already made wearables socially acceptable. The next step is turning millions of willing users into a distributed data collection army for physical AI. The headset is not just a consumer product. It is a sensor network for training robots.

A small team will demo real embodied general intelligence before a big lab does. Carmack's thesis is not just optimism. It reflects a genuine architectural insight about how few lines of code the core might require.

Humanoid robots will be working in elder care facilities by 2030. Japan and South Korea will lead. The demographic pressure is too urgent to wait.

Embodied intelligence will split AI into two industries. One builds digital minds for knowledge work. The other builds physical agents for the real world. The second will be worth more.

We built machines that can think.

The next step is machines that can exist.